How I measure racehorse performance across 11 countries and one million races.

I'm about to launch mrworldpool.com — a premium horse racing analytics platform I've built from scratch with plenty of help from AI along the way. This article explains the core of the product: the rating. What it measures, how it works, and the 20-year journey behind it.

I've spent the better part of 20 years building something that most people in my life know surprisingly little about. It started as a hobby when I was seventeen — making speed figures for Scandinavian horse racing with my younger brother — and has grown into Mr. World Pool (mrworldpool.com), a platform that rates racehorses across more than eleven countries using methods I've developed and refined over more than a million race results.

I recently wrote this piece to explain what the ratings actually are, how they work, and where they come from. It's part explainer, part personal history, and part love letter to a craft that has consumed a significant portion of my adult life. If you've ever wondered what I actually do when I say I "work with horse racing data", this is it.

The original article is posted in the Learn section of the new website, under Articles: https://www.mrworldpool.com/learn

Understanding the Rating

The central problem in handicapping

Thoroughbred racehorses compete across different tracks, distances, surfaces and conditions. The weight they carry changes from race to race. The competition changes. The ground changes. They drift in and out of form like any athlete. Making sense of all this — figuring out how fast a horse actually ran in a given race, relative to everything else — is the central problem in handicapping.

In horse racing, the tools for solving it go by many names. Speed figures, performance ratings, speed ratings, performance figures. For me they are all the same thing — an attempt to quantify and standardise the merit of a performance so that it can be compared against any other performance. How well did this horse run today? A single number that answers that question, accounting for everything we can account for — a number you can compare across tracks, across countries, across surfaces. A 120 at Ascot and a 120 at Sha Tin and a 120 at Meydan mean the same thing.

Getting there is harder than it sounds. Especially when raw times can vary by ten seconds from one day to the next on the same track, depending on how much moisture is in the ground. This is the story of how we got there.

A Brief History of Speed Figures

The first published work on performance ratings dates back to 1936, when E.W. Donaldson released "Consistent Handicapping Profits." With it, he laid the foundation for a long tradition of competing schools and philosophies in the art of making speed figures — particularly in America.

The breakthrough moment came in 1992, when Andy Beyer's speed figures were adopted by the Daily Racing Form and became available to every horseplayer in the country. The Beyer Speed Figure is elegant in its simplicity. You take the final time for each horse, adjust for track speed using a set of expected par times for each class and distance, and express the result as a number. A first approximation of how fast a horse actually ran — and for millions of horseplayers, a revolution.

Running parallel to Beyer — and in some ways predating him — was Len Ragozin, a Harvard graduate whose career in journalism was cut short by the FBI during the McCarthy era. Ragozin turned to his father Harry's hobby of using mathematics to quantify racehorses. Ragozin understood that factors like riding weight and the extra distance horses cover when racing wide through turns — what the Americans call "ground loss" — could and should be incorporated into the figures. His "Sheets" became a premium product for serious bettors. But Ragozin also had blind spots. His treatment of track speed was, frankly, strange: he assumed that track conditions didn't change during a race day unless there was an obvious cause like a rainstorm, and he'd carry the same track variant across from one day to the next if the weather hadn't changed. Anyone who has worked with ratings knows this is wrong. Track speed changes constantly — with moisture, with maintenance, with the number of hooves that have chewed up the surface.

It was Jerry Brown, a former Ragozin employee, who built on these foundations to create Thoro-Graph — and in many ways, Thoro-Graph remains my closest relative in this world. Brown saw the problems with Ragozin's approach and introduced what I consider the most important innovation in figure making: the idea that the clock alone isn't always enough, and that there are additional data points available — things you already know about the horses in a race — that can help you arrive at a more accurate picture of what actually happened. That insight changed everything for me.

Where I Came From

I've been making performance figures since I was eighteen. I started in Norway, then expanded to Sweden, then Meydan in Dubai, then Denmark. My first love, though, was actually American racing. I discovered the sport through Thoro-Graph and spent years studying Beyer's methodology, Ragozin's philosophy, and every book and article I could find on the subject. I spent thousands of hours on the legendary Thoro-Graph message board — where I stirred more than one controversy and learned that if you can't defend your views under fire, you probably shouldn't hold them. Great times, great banter, and the best education a young figure maker could ask for.

For over ten years, my younger brother and I made figures together. His job was to study the race replays and record the position of every horse through every turn — the raw data needed to calculate ground loss. My job was to turn that into ratings. We believed, as Thoro-Graph does, that accounting for ground loss was the most accurate way to make figures. One path out from the rail through a turn costs a horse roughly one length of extra distance. That's geometry — or so we believed. The maths is straightforward. But turning it into a fair measure of what a performance was actually worth? That turned out to be much harder.

And then one day, my brother said he couldn't do it anymore. He had other priorities, which was perfectly fair. But by that point, I'd already started questioning whether ground loss was actually making the figures better.

The Ground Loss Problem

Here's what I noticed. You get a lot of bloated figures for horses that race wide. A horse goes four wide through both turns, gets a generous ground loss credit, and suddenly posts an enormous number. Meanwhile, the horse that saved ground on the rail gets no credit — and their figure makes the race look weaker than it was.

The issue is that not all ground loss is real. There's "true" ground loss — sitting outside a leader in a wicked pace, burning energy to keep up while covering more ground. That's costly, and any credit these horses get, they deserve. But there's also "false" ground loss. The pace is slow and everyone is coasting — being a path wide costs you almost nothing. Or worse, the track has a bias that actually favours the outside, and now you're inflating figures for horses that had the best trip.

I saw this problem again and again. The wide runners got huge numbers. The inside runners got punished. And when you tracked the wide runners forward, they rarely replicated those figures. The numbers looked spectacular on paper, but they didn't predict anything. And some horses simply prefer to race wide — they need daylight, they'll go wide from any stall, and they'll do the same thing next time they run well. Ground loss credits for these horses are essentially counting a preference as a penalty.

So when I was left alone with this venture, dropping ground loss was an easy decision. I still note when a horse has raced genuinely wide, and when I sit down to handicap a race, I'll upgrade them in my head. But it's not in the algorithm. And the enormous benefit — the thing that made it all worthwhile — was that without the labour-intensive video work, I could think bigger. I could look at Hong Kong, Japan, Australia, the whole Middle East, all of Europe. That was the best decision I ever made.

How the Ratings Work

I should be upfront: some of this is the secret sauce, and it's going to stay that way. But I can tell you the principles, and I can tell you what makes this approach different.

Time is the starting point — but not the whole story. The first ingredient is always the race time. But a raw time means almost nothing on its own. A fast time at Epsom is a completely different thing from a fast time at Ascot. So the system works from carefully calibrated standard times for every track, distance, and surface — built up over years and constantly refined as the database grows. Just as important as the winning time itself are the distances between horses at the finish — they tell you about the strength of each performance relative to the others on the day.

Track speed is everything. The same track on Monday and Wednesday can differ by seconds, even if the official going description hasn't changed. Much of what makes this method unique is how I calculate track speed. The system looks at each race day as a whole and identifies tendencies across all the races, because while individual horses can produce extreme performances on any given day, it's unusual for an entire field to collectively perform well above or below normal. That track variant calculation is the backbone of the rating.
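
As a rough illustration of the day-level idea only, and not the method used for these ratings, here is the textbook version: take each race's deviation from its standard time, scale it per furlong, and use the median across the card as the day's variant. The function names, the per-furlong scaling and the sample card are all assumptions made for the sketch.

```python
from statistics import median

def daily_variant(races):
    """Return the card's track variant in seconds per furlong.

    `races` is a list of (actual_time, standard_time, furlongs) tuples.
    A positive variant means the track rode slower than standard.
    """
    per_furlong = [(actual - standard) / furlongs
                   for actual, standard, furlongs in races]
    # Median rather than mean: one freak race should not move the whole day.
    return median(per_furlong)

def adjusted_time(actual, furlongs, variant):
    """Remove the day's track speed from a raw time."""
    return actual - variant * furlongs

# Hypothetical card: (actual seconds, standard seconds, furlongs)
card = [(61.0, 60.0, 5), (87.8, 86.0, 7), (102.4, 100.0, 8), (125.0, 127.0, 10)]
variant = daily_variant(card)
first_race_adjusted = adjusted_time(61.0, 5, variant)
```

Three of the four races ran slow and one ran fast, so the median treats the day as slow and every time on the card is adjusted by the same per-furlong amount.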

Riding weight is baked in. A horse carrying 60kg that runs the same time as a horse carrying 54kg has run a better race. The relationship between weight and performance is well-established through decades of research, but the precise effect varies with distance, surface, and conditions, so I won't reduce it to a single number here.

Turf and dirt are completely separate. This is a deliberate choice: I treat turf form and dirt form as entirely different things. A turf rating can never influence a dirt rating, and vice versa. They are essentially different sports. For practical purposes, I've also chosen to group all dirt tracks and the various all-weather tracks under one umbrella. I know these surfaces have their own nuances. But the data supports the grouping, and it keeps things clean.

Every horse in a race gets the same adjustment. This is a principle I will never break. If you want to adjust one horse up by two points, you must adjust every horse in that race up by two points. This means the process is always about finding the best fit for the entire race — not about making individual horses look right. You use every available data point: the times, the track speed, the weight, and — critically — what you know about these horses from their previous performances. The goal is to arrive at the set of figures that most accurately represents what actually happened, given everything you know.
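
One simple way to picture the "same adjustment for every horse" rule is a single shift fitted to the whole field. The sketch below assumes each runner with prior form has a hypothetical expected figure, and uses the median gap as the best fit; both the names and the choice of median are illustrative assumptions, not the production algorithm.

```python
from statistics import median

def fit_race_shift(raw_figures, expected_figures):
    """Find one shift for the whole race that best matches prior form.

    Both arguments map horse name -> figure. Only horses that have an
    expected figure contribute; the median keeps a single outlier from
    dragging the whole race.
    """
    gaps = [expected_figures[h] - raw
            for h, raw in raw_figures.items() if h in expected_figures]
    return median(gaps) if gaps else 0.0

def apply_shift(raw_figures, shift):
    """Apply the same shift to every runner, never to one horse alone."""
    return {h: raw + shift for h, raw in raw_figures.items()}

# Hypothetical race: D is a debutant with no expected figure.
raw = {"A": 112.0, "B": 108.0, "C": 105.0, "D": 99.0}
expected = {"A": 115.0, "B": 110.0, "C": 107.0}
shift = fit_race_shift(raw, expected)
final = apply_shift(raw, shift)
```

Because every runner moves by the same amount, the gaps between horses at the finish are preserved exactly; only the level of the race changes.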

This last part is what separates these ratings from simpler approaches. Some figure makers use only the clock and a track variant. Some use par times. Some use wind data. Some break out races, others never do. They all believe their method is the best. The truth is that every method has flaws. My approach has been to find the method that is the most robust across the widest range of circumstances — across different tracks, different countries, different racing cultures. Some things I can talk about. Some things I can't. But I wouldn't trade my ratings for anyone's. That's the bottom line.

On Peaks and Philosophy

Much of figure making comes down to deciding when to accept new peaks, and how rigid to be in your methodology. Some figure makers are famously inflexible — they trust their calculations absolutely and will print enormous career bests if that's where the numbers land, even when half the field shows peaks on the same afternoon. Ragozin was known for this. The philosophy sounds principled, but in practice it often means accepting results that don't make sense rather than questioning whether something upstream went wrong.

I'm more practical, and probably more conservative than most. When I see a race where the figures suggest that several horses all ran career bests simultaneously, I'm more inclined to look for an explanation — track speed, pace bias, conditions — than to accept six breakthroughs on the same card. In my experience, there are more legitimate reasons for off performances than there are for peak performances. A horse can underperform for dozens of reasons: a bad trip, wrong ground, too much weight, illness, a poor ride, wrong distance, bad luck in running. A horse that genuinely outperforms its established level? That's rarer, and it needs to be earned.
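
A check like this is easy to automate. The sketch below flags a race for a second look when too large a share of the field has beaten its previous best; the function name and the 50% cutoff are assumptions chosen for illustration, not values taken from the production system.

```python
def needs_review(figures, previous_bests, max_share=0.5):
    """Flag a race when too many runners exceed their previous best.

    `figures` and `previous_bests` map horse name -> rating. Horses
    without a previous best are ignored. `max_share` is an assumed cutoff.
    """
    known = [h for h in figures if h in previous_bests]
    if not known:
        return False
    peaks = sum(figures[h] > previous_bests[h] for h in known)
    return peaks / len(known) > max_share

# Hypothetical race: three of four runners "peak" on the same afternoon.
figures = {"A": 120, "B": 118, "C": 115, "D": 110}
previous_bests = {"A": 114, "B": 113, "C": 112, "D": 111}
flag = needs_review(figures, previous_bests)
```

A flagged race is not automatically wrong. It is a prompt to look again at track speed, pace and conditions before accepting the figures.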

This philosophy produces ratings that are predictive and practical in use. The spectacular peaks that never get repeated are not useful if what you're trying to do is figure out what a horse will run next week. And since the historical ratings serve as data points for everything that comes after — the form, the trends, the models — keeping them honest matters.

The Algorithm and the Scale

After hundreds of iterations, I arrived at an algorithm that both works well and scales well across different racing jurisdictions. It's impossible to make ratings for over a million races the old-school way — by hand, one race day at a time — so the algorithm had to be robust enough to handle everything from a Tuesday handicap at Wolverhampton to the Dubai World Cup. I still flag quite a few races for manual review, but the production runs well, and the quality improves every year.

The system now covers eleven countries: Great Britain, Ireland, Hong Kong, the UAE, Norway, Sweden, Denmark, France, Germany, Japan, and Australia — along with group races from around the world.

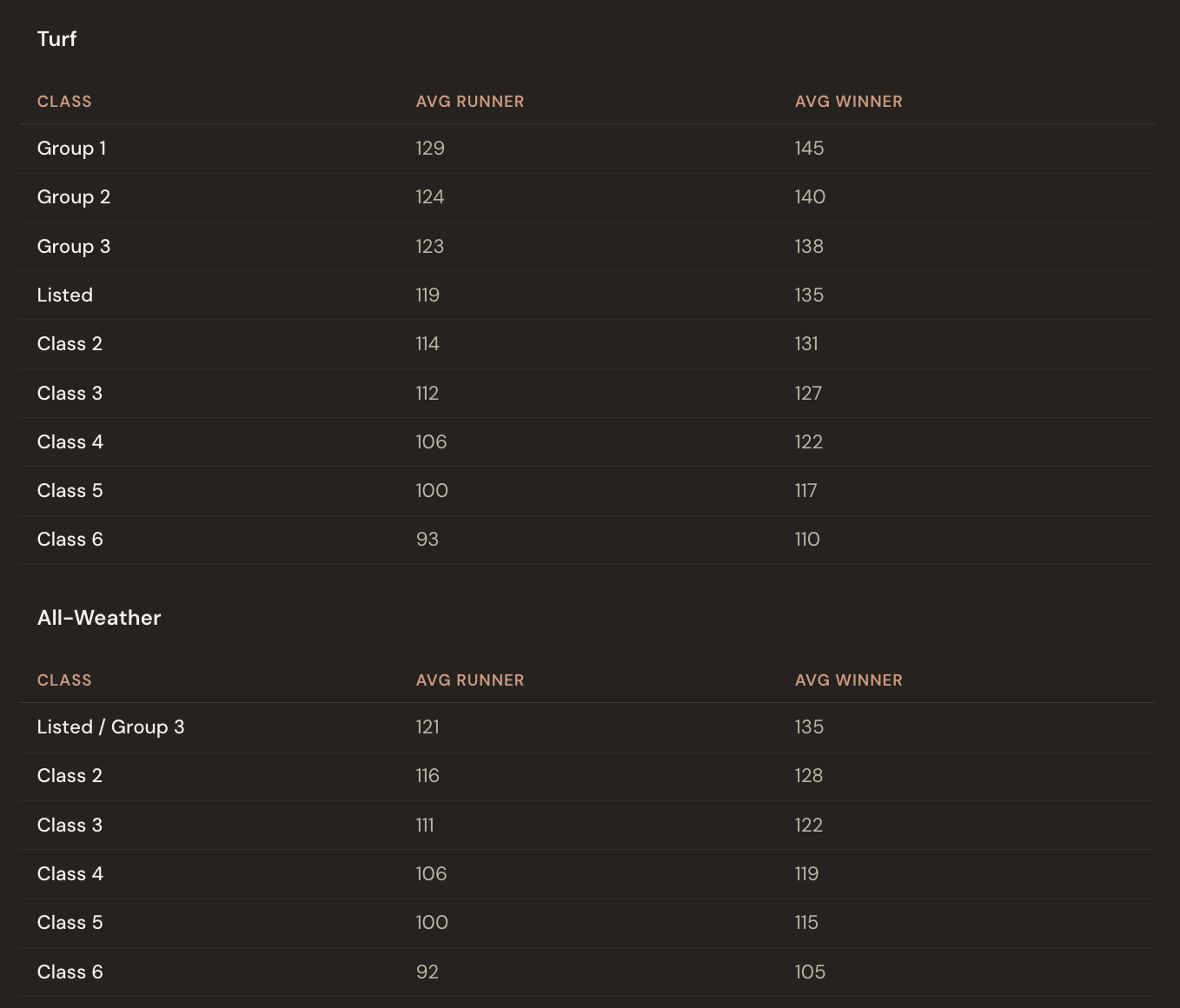

To give you a concrete sense of the scale, here's what the numbers look like in practice. This data is from UK and Irish racing, 2023 onwards — thousands of races across all classes:

A typical Class 5 runner averages around 100 — that's roughly the middle of the road. A Group 1 winner averages 145, and the very best performances push past 155. At the bottom of the scale, the weakest races in Scandinavian racing might produce winning ratings in the low 80s.

Using the Ratings

The Handicapper

The ratings come alive in the Handicapper section of each racecard on mrworldpool.com. For each horse, the Expected Rating module translates past performances into projected beaten distances. You don't just see that Horse A is rated 118 and Horse B is rated 125 — you see, visually, that B is expected to finish roughly three and a half lengths clear, all else being equal.
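
The 118 versus 125 example implies roughly two rating points per length. The sketch below assumes that conversion is constant, which is a simplification: the conversion used on the site may well vary with distance or surface. The function names are hypothetical.

```python
POINTS_PER_LENGTH = 2.0  # assumption inferred from the 118 vs 125 example

def expected_margin(rating_a, rating_b, points_per_length=POINTS_PER_LENGTH):
    """Lengths by which horse B is expected to beat horse A.

    A negative result means A is expected to finish in front.
    """
    return (rating_b - rating_a) / points_per_length

def projected_beaten_lengths(ratings, points_per_length=POINTS_PER_LENGTH):
    """Map each horse to its expected distance behind the top-rated runner."""
    top = max(ratings.values())
    return {h: (top - r) / points_per_length for h, r in ratings.items()}

margin = expected_margin(118, 125)
field = projected_beaten_lengths({"Horse A": 118, "Horse B": 125})
```

Here `margin` comes out at 3.5 lengths, matching the example above, and `field` puts Horse B at zero with Horse A three and a half lengths behind.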

The whole exercise becomes about one question: what does this horse run today? You look at the form. The conditions. The trainer pattern, the jockey booking, the surface, the trip. You form a view — maybe you think Horse A will improve three points on its last run, maybe you think Horse C will bounce after a big effort. Then you check: if they run to that figure, are they competitive? The Handicapper shows you instantly.

Over time, this is how you develop a feel for what the numbers mean. You start to know what 110 looks like, what 125 looks like, what it takes to win at a given level on a given day. The numbers stop being abstract and start being a language you speak.

Under the Hood — The Expected Rating Model

Behind the Handicapper sits a machine learning model trained on over one million race results. It combines nearly 400 data points per horse — recent form, track and surface preferences, going and distance aptitude, trainer and jockey statistics, gate position and more — to predict the most likely performance today. Because horses naturally regress from peak figures, the Expected Rating will often appear lower than recent best form. This is statistically correct, not a flaw. The key value lies in the relative differences between horses in a race — these rankings provide a strong analytical starting point.

The handicapper's edge comes from identifying which horses are likely to run to or above their best — which is often what winning a race demands, regardless of class. The model sets the baseline. Your judgement determines who beats it.

A Note on Ground Loss

Even though ground loss isn't in the algorithm, it's worth being aware of. If a horse raced genuinely wide (not the cheap kind where the pace was slow and it cost nothing, but truly wide in a strongly run race), upgrade them a bit in your head. If you think they'll end up wide again today, factor that in. Look at the gate draw. Check the track statistics. Look for the wide-running flags in the form.

The old rule of thumb is roughly one length per path out from the rail per turn. It adds up.
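
The rule of thumb falls out of arc length: a wider path has a larger radius, and the extra distance is the extra radius times the angle of the turn. The sketch below assumes a path about 0.9 m wide, a length of about 2.4 m and 180-degree turns; these are commonly quoted approximations, not measured values, and the function names are hypothetical.

```python
import math

def extra_distance_m(paths_wide, turn_degrees=180.0, path_width_m=0.9):
    """Extra metres covered by racing `paths_wide` paths off the rail
    through one turn. Arc length = radius * angle in radians."""
    return paths_wide * path_width_m * math.radians(turn_degrees)

def ground_loss_lengths(paths_per_turn, turn_degrees=180.0,
                        path_width_m=0.9, length_m=2.4):
    """Total ground loss in lengths.

    `paths_per_turn` lists how many paths off the rail the horse
    raced at each turn, e.g. [3, 3] for two turns raced four wide.
    """
    total_m = sum(extra_distance_m(p, turn_degrees, path_width_m)
                  for p in paths_per_turn)
    return total_m / length_m

one_path_one_turn = ground_loss_lengths([1])
four_wide_both_turns = ground_loss_lengths([3, 3])
```

Under these assumptions one path through one turn costs a little over a length, and a horse four wide through both turns (three paths off the rail each time) gives up about seven lengths on paper. Whether that paper loss was a real cost is the judgement call described above.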

The rating gives you the foundation. Your judgement builds on top of it.

The rating system has been developed over 20 years across more than 11 countries and more than one million race results. It is the foundation of everything on Mr. World Pool — explore the full product at mrworldpool.com.